👋 I am a master's student at Tsinghua University. My research interests span avatar animation, AI agents, AIGC, and embodied AI, especially areas with a strong connection to humans.

I hope to realize AGI for the benefit of humanity through generative AI.

My Google Scholar profile is here.

🎓 I graduated first in my college (rank 1/233) with a B.S. in Software Engineering from Harbin Engineering University. I am now a master’s student at Tsinghua University, supervised by Prof. Ruqi Huang, and expect to graduate in Fall 2027.

🔥 I am actively seeking PhD positions starting Fall 2027, as well as RA/visiting student opportunities!

My current research focuses on understanding, reasoning, and generation for Avatar/3D/4D/video/animation, and I am particularly interested in spatial intelligence for embodied AI.

💼 Several professors I know well are recruiting research interns (including undergraduates) and MS/PhD students, and can offer competitive internship salaries or MS/PhD admission offers. If you are interested in 3D / VLM / video / animation generation and understanding, please feel free to contact me!

junhao-c24@mails.tsinghua.edu.cn

🙋‍♂️ If you are interested in working, discussing, or collaborating with me, feel free to drop me an email.

Outstanding undergraduate and graduate students are welcome to get in touch about research collaboration!

😥 Click here to enter emo time!

🔥 News

- 2026.02: 🎉 LottieGPT has been accepted by CVPR 2026! See you in Denver, USA 🇺🇸!

- 2026.02: 🎉 HVG-3D has been accepted by CVPR 2026! See you in Denver, USA 🇺🇸!

- 2026.02: 🎉 Animator-Skeleton-Gen has been accepted by CVPR 2026! See you in Denver, USA 🇺🇸!

- 2026.02: 🎉 From Frames to Sequences has been released! This work estimates normals and depth from human images.

- 2026.01: 🎉 GarmentGPT has been accepted by ICLR 2026! See you in Rio de Janeiro, Brazil 🇧🇷!

- 2026.01: 🎉 DanceTogether has been accepted by ICLR 2026! See you in Rio de Janeiro, Brazil 🇧🇷!

- 2025.12: 🎉 Ultraman has been accepted by Machine Vision and Applications!

- 2025.11: 🎉 LLMsPark has been accepted by EMNLP 2025! See you in Suzhou, China 🇨🇳!

📝 Publications

🧑‍🎨 Controllable World Model

LottieGPT: Tokenizing Vector Animation for Autoregressive Generation

Junhao Chen, Kejun Gao, Yuehan Cui, Mingze Sun, Mingjin Chen, Shaohui Wang, Xiaoxiao Long, Fei Ma, Qi Tian, Hao Zhao †, Ruqi Huang †

- Tokenizes Lottie vector animations and finetunes a multimodal model to generate coherent, editable vector animations from text or visual prompts.

HVG-3D: Bridging Real and Simulation Domains for 3D-Conditional Hand-Object Interaction Video Synthesis

Mingjin Chen *, Junhao Chen *, Zhaoxin Fan †, Yujian Lee, Zichen Dang, Lili Wang, Yawen Cui, Lap-Pui Chau †, Yi Wang

- HVG-3D: A 3D-aware HOI video diffusion framework with 3D ControlNet that turns one image plus 3D control signals into spatially precise, temporally coherent interaction videos.

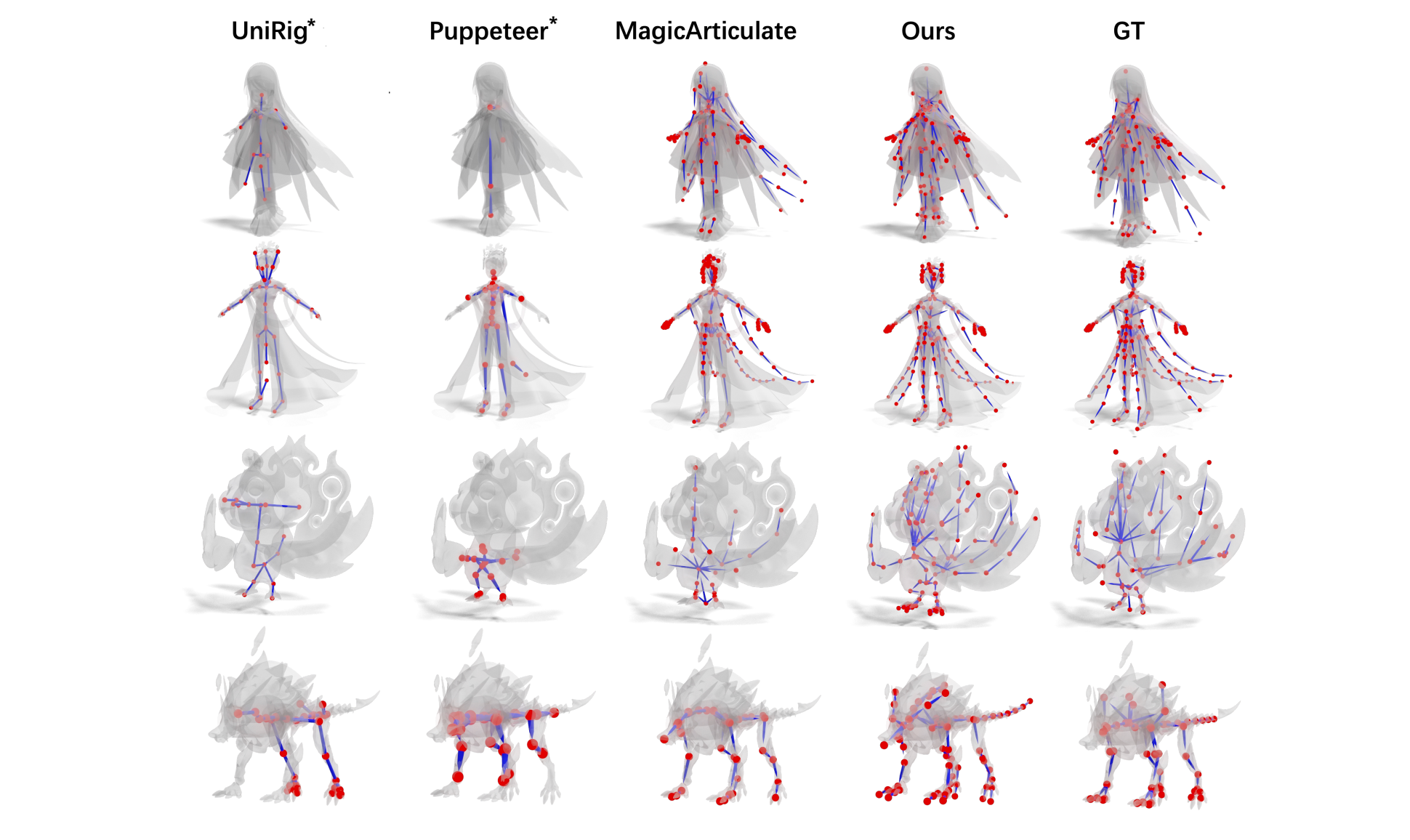

Animator-Centric Skeleton Generation on Objects with Fine-Grained Details

Mingze Sun, Cheng Zeng, Jiansong Pei, Junhao Chen, Chaoyue Song, Shaohui Wang, Tianyuan Chang, Bin Huang, Zijiao Zeng, Ruqi Huang †

- Uses semantic-aware tokenization, a large rigged-mesh corpus, and a density-control module to generate high-quality, controllable skeletons for complex 3D assets.

GarmentGPT: Compositional Garment Pattern Generation via Discrete Latent Tokenization

Fangsheng Weng *, Junhao Chen *, Xiang Li, Jie Qin, Hanzhong Guo, Shaochun Hao, Xiaoguang Han †

- Uses RVQ-VAE tokenization and a VLM generator to produce garment sewing patterns from discrete latent tokens, achieving strong accuracy on large curated datasets.

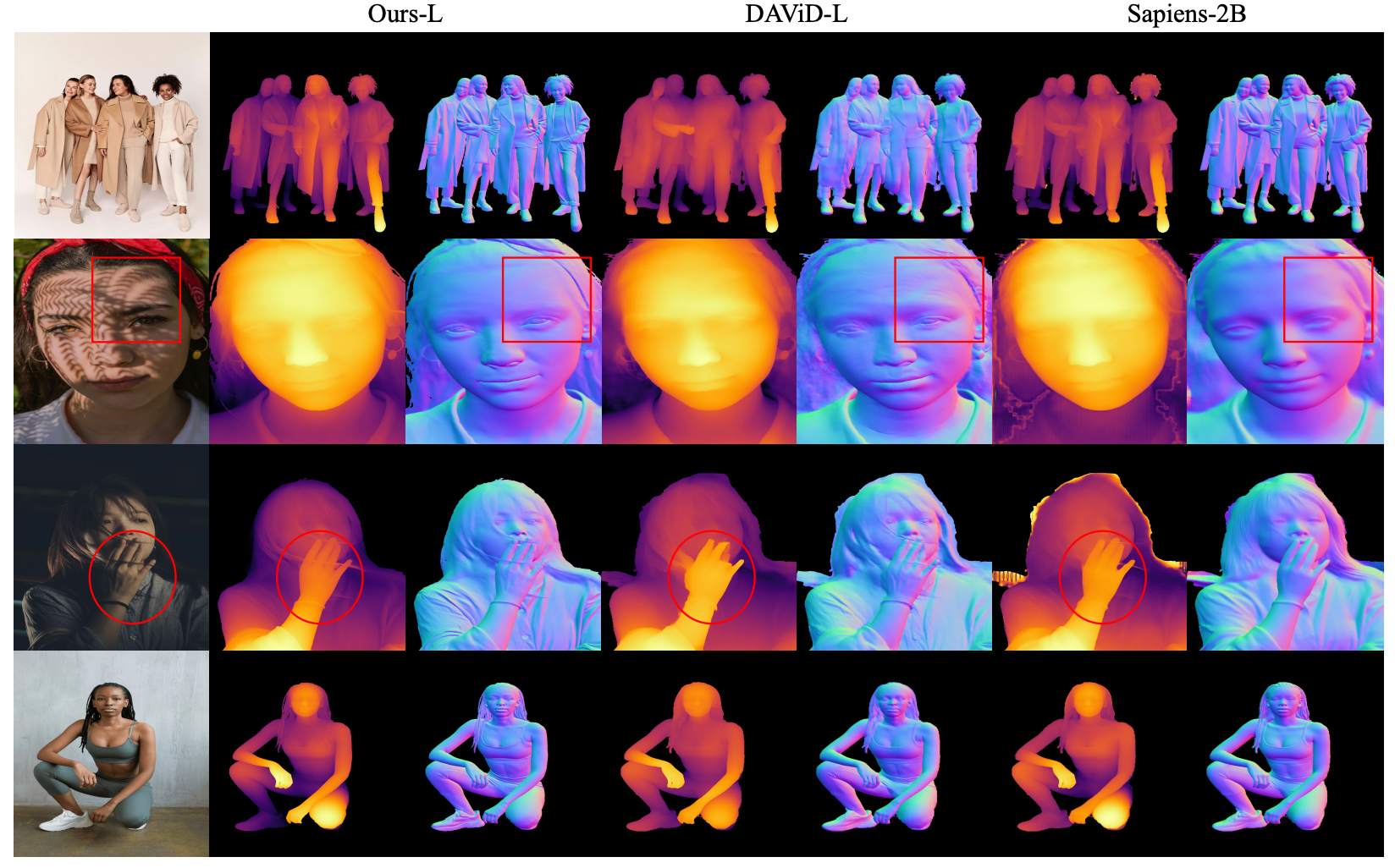

From Frames to Sequences: Temporally Consistent Human-Centric Dense Prediction

Xingyu Miao, Junting Dong †, Qin Zhao, Yuhang Yang, Junhao Chen, Yang Long †

- Learns temporally consistent human-centric segmentation, depth, and normals via synthetic video supervision and a two-stage static→dynamic training pipeline.

DanceTogether! Identity-Preserving Multi-Person Interactive Video Generation

Junhao Chen, Mingjin Chen, Jianjin Xu, Xiang Li, Junting Dong †, Mingze Sun, Puhua Jiang, Hongxiang Li, Yuhang Yang, Hao Zhao, Xiaoxiao Long, Ruqi Huang †

- This work generates identity-preserving multi-person interactive dance videos with controllable motion and appearance!

DRiVE: Diffusion-based Rigging Empowers Generation of Versatile and Expressive Characters

Mingze Sun *, Junhao Chen *, Junting Dong †, Yurun Chen, Xinyu Jiang, Shiwei Mao, Puhua Jiang, Jingbo Wang, Bo Dai, Ruqi Huang †

- This work generates skeletons and skinning, including for clothes and hair, for 3D Gaussian avatars!

Ultraman: Single Image 3D Human Reconstruction with Ultra Speed and Detail

Mingjin Chen *, Junhao Chen *, Huan-ang Gao, Xiaoxue Chen, Zhaoxin Fan, Hao Zhao †

- This work converts a single image of the human body into a lifelike 3D model!

Idea23D: Collaborative LMM Agents Enable 3D Model Generation from Interleaved Multimodal Inputs

Junhao Chen *, Xiang Li *, Xiaojun Ye, Chao Li, Zhaoxin Fan †, Hao Zhao †

- This work enables automated 3D model design and generation for people!

🎙 Multi-modal

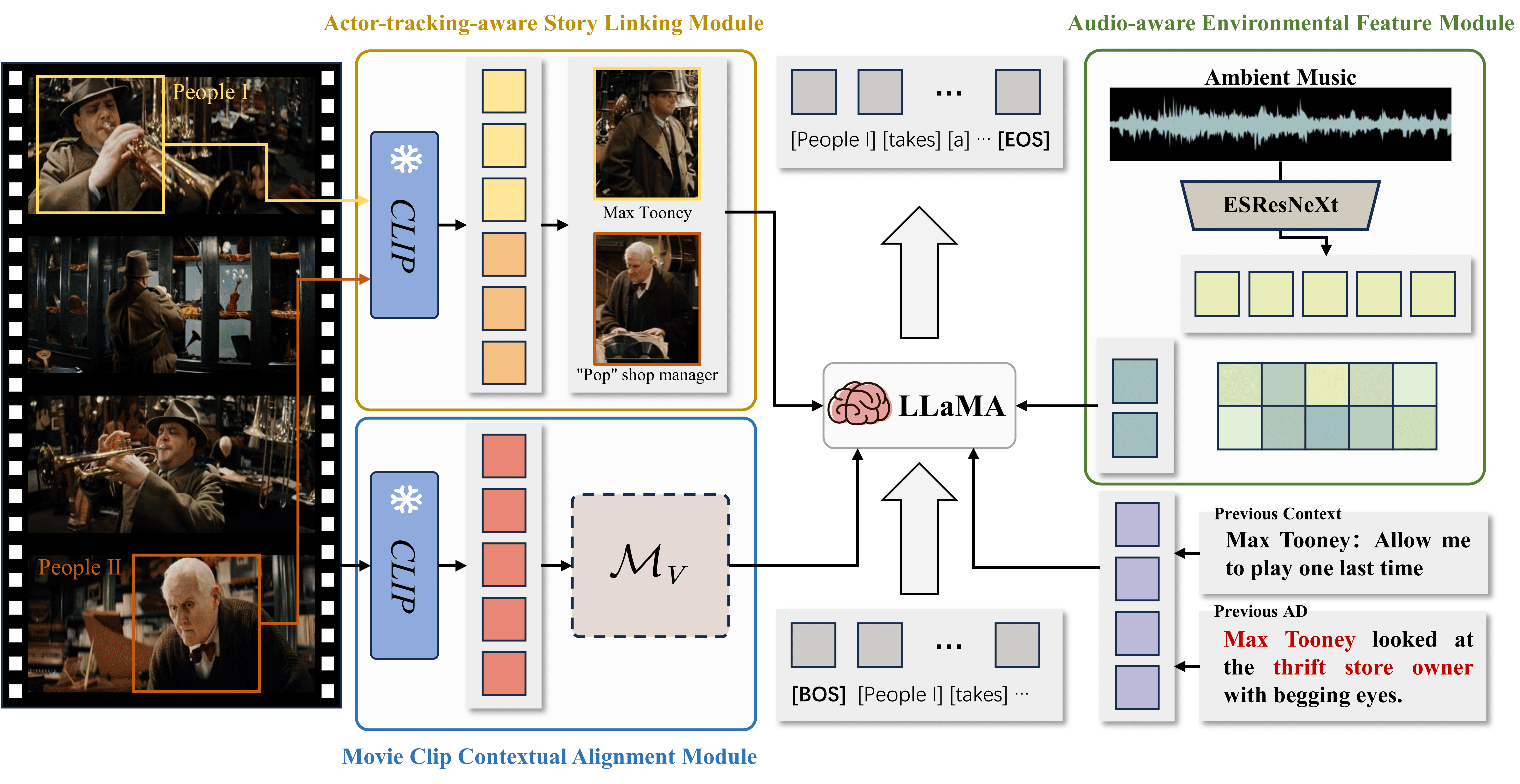

MMAD: Multi-modal Movie Audio Description

Xiaojun Ye, Junhao Chen, Xiang Li, Haidong Xin, Chao Li, Sheng Zhou †, Jiajun Bu

- This work has unlocked a whole new experience of watching movies for the visually impaired.

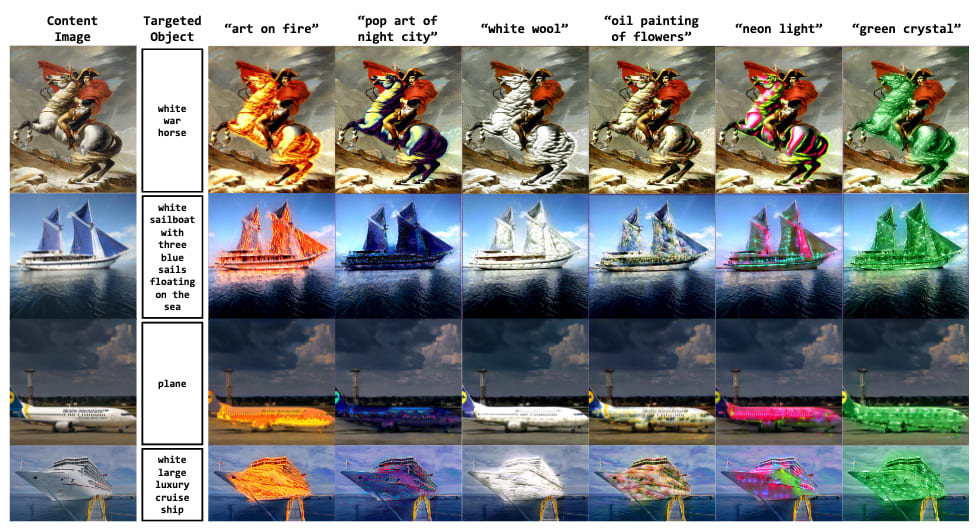

FineStyler: Text-guided Instance-level Fine-grained Image Style Transfer

Junhao Chen, Rong Peng, Xiang Li, Jingbo Sun, Hao Zhao, Ruqi Huang

- This work enables fine-grained, instance-level stylization of a single image through text guidance!

👀 Large Language Model

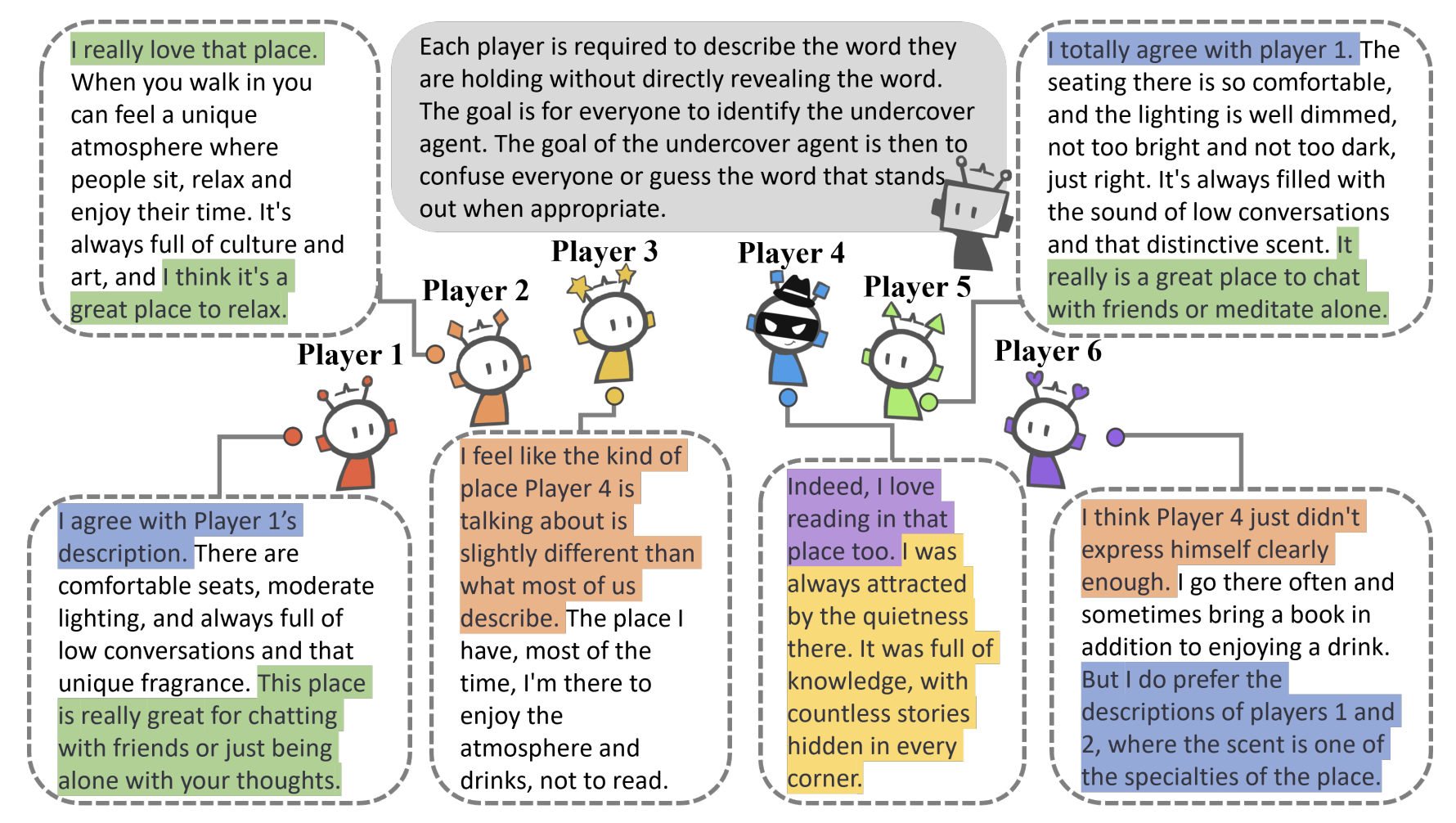

LLMsPark: A Benchmark for Evaluating Large Language Models in Strategic Gaming Contexts

Junhao Chen, Jingbo Sun, Xiang Li, Haidong Xin, Yuhao Xue, Yibin Xu, Hao Zhao †

- This work evaluates LLMs through a game-theoretic framework.

IW-Bench: Evaluating Large Multimodal Models for Converting Image-to-Web

Hongcheng Guo, Wei Zhang, Junhao Chen, Yaonan Gu, Jian Yang, Junjia Du, Shaosheng Cao, Binyuan Hui, Tianyu Liu, Jianxin Ma, Chang Zhou, Zhoujun Li

- This work is a benchmark for evaluating the image-to-HTML code generation capabilities of large multimodal models.

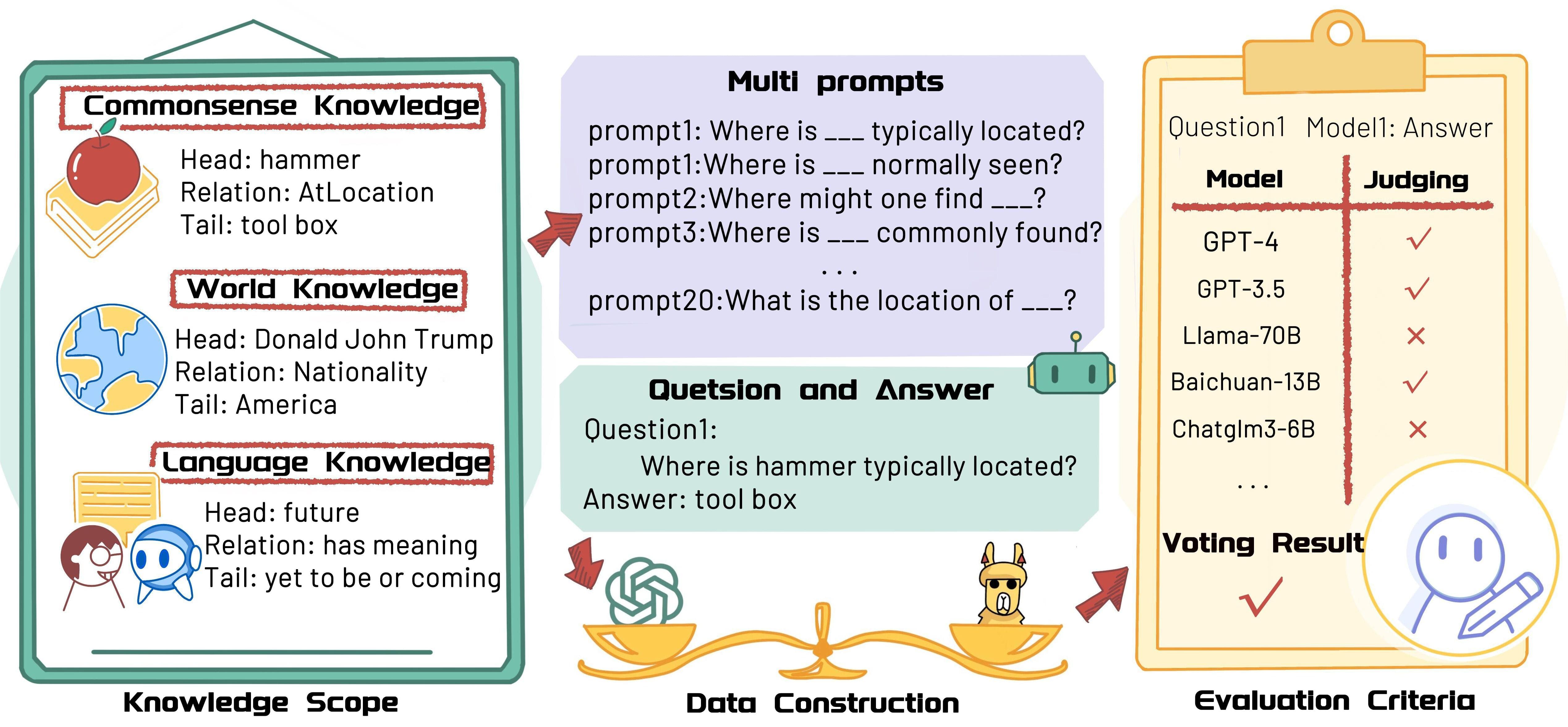

ZhuJiu: A Multi-dimensional, Multi-faceted Chinese Benchmark for Large Language Models

Baoli Zhang, Haining Xie, Pengfan Du, Junhao Chen, Pengfei Cao, Yubo Chen †, Shengping Liu, Kang Liu, Jun Zhao

- This work serves as a benchmark for evaluating the Chinese language capabilities of large language models.

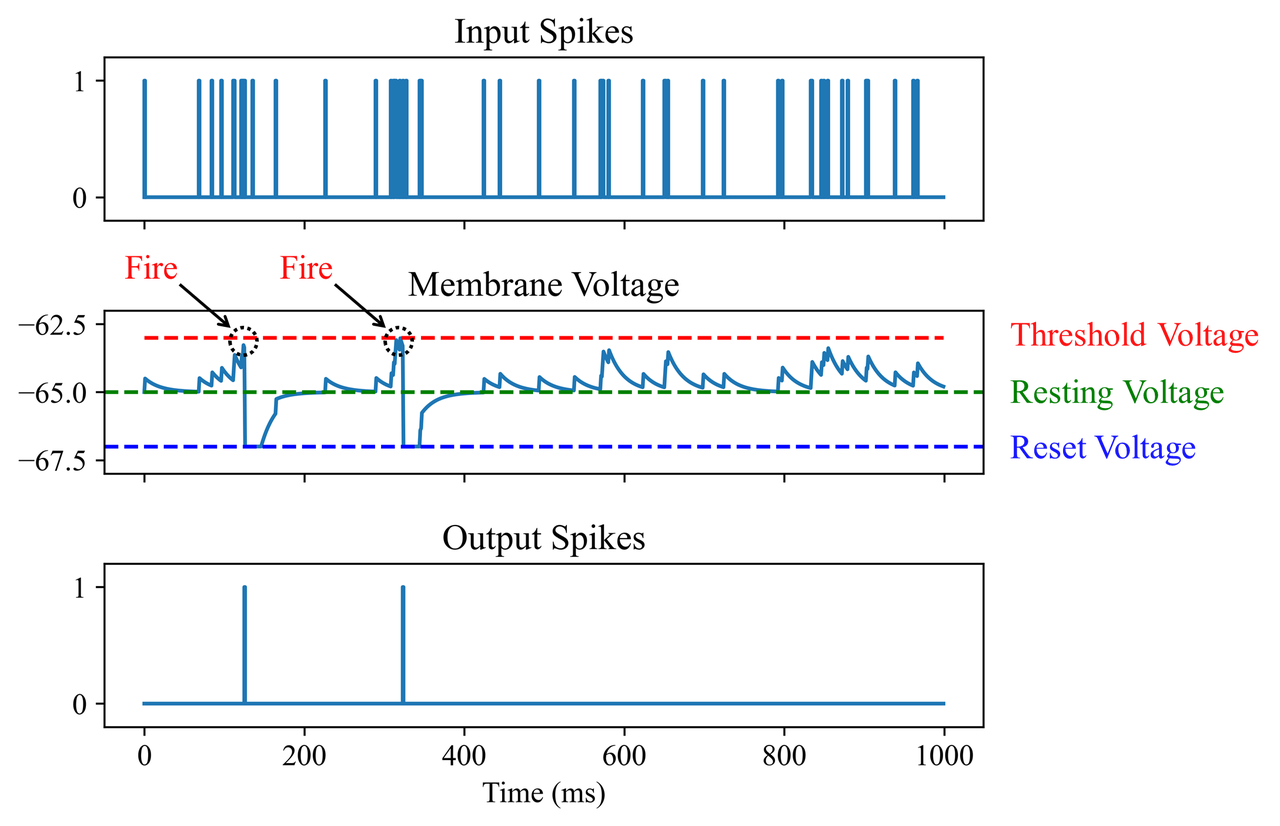

Towards Energy-Efficient Sentiment Classification with Spiking Neural Networks

Junhao Chen, Xiaojun Ye, Jingbo Sun, Chao Li †

- This work applies a spiking neural network to a natural-language sentiment classification task, with a focus on energy efficiency.

🎖 Honors and Awards

Innovation and entrepreneurship competition awards: 10 national, 45 provincial, and 11 school-level, 66 in total.

Honors: 6 national, 2 provincial, and 20 school-level, 28 in total.

Competition awards and individual honors total 94 (as of November 18, 2024).

List of all awards received.

📖 Education

- 2024.08 - 2027.06, M.Eng. in Data Science @ Tsinghua University, Shenzhen.

- 2020.09 - 2024.06, B.Eng. in Software Engineering (rank 1 / 223) @ Harbin Engineering University, Harbin.

💻 Experiences

- 2025.01 - 2025.05, Research Intern, Shanghai AI Lab, Shanghai.

- 2024.09 - 2025.01, Research Intern, AIGrowthGroup@TAL Education Group, Beijing.

- 2024.06 - 2024.08, Research Intern, Lightillusions, Shenzhen.

- 2023.08 - 2024.05, Research Intern, DISCOVERLab@Institute for AI Industry Research (AIR), Tsinghua University, Wuxi.

- 2023.04 - 2023.08, Research Intern, National Lab of Pattern Recognition@Institute of Automation, Chinese Academy of Sciences, Beijing.

🧑‍💻 Professional Services

Reviewer@ACL ARR (2025.02 - present), ICLR (2026), AAAI (2026), ICML (2026), ICME (2025-2026), ICMR (2025-2026)